I was involved in an interesting problem in the office recently. The problem is already documented in other posts such as:

Oracle 11g JDBC driver hangs blocked by /dev/random – entropy pool empty

Oracle JDBC Intermittent Connection Issue

MOS note “Adbldxml.pl Hangs After Upgrade To 11.2.0.3 (Doc ID 1585759.1)”

Essentially the problem is that /dev/random will block when there is insuffient entropy and JDBC uses /dev/random when negotiating a connection. I’ll not go back over information from the web pages above, please have a quick read of one of them.

What I wanted to do was demonstrate the issue using a Java program from the above MOS note and show evidence of the issue and delays. All the program does is connect to a database using a JDBC thin driver and “SELECT USER FROM dual”, I’ll run it three times as below:

time java oraConn & time java oraConn & time java oraConn &

and this is the output (“NJ” is my database account name):

NJ real 0m2.001s user 0m1.093s sys 0m0.094s NJ real 0m39.051s user 0m1.163s sys 0m0.089s NJ real 0m44.316s user 0m1.162s sys 0m0.087s

2 seconds for the first connection and 39 and 44 seconds for the others! Not good. We can use “strace” to find out what the processes are doing during these delays.

strace -t -o runjv1.trc -f java oraConn & strace -t -o runjv2.trc -f java oraConn & strace -t -o runjv3.trc -f java oraConn &

This is what I see in my strace output for one of the delayed sessions, repeated timeouts, many more than displayed below:

tail -f runjv1.trc

...

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 520708960}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 571177374}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 621626926}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 672072270}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 722524322}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 772872653}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

2755 21:02:02 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:02:02 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3390, 823298750}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

...

If I scroll up to the start of the block of timeouts I see this:

2734 21:01:30 read(15, <unfinished ...>

2755 21:01:30 <... futex resumed> ) = -1 ETIMEDOUT (Connection timed out)

2755 21:01:30 futex(0x7f1c440b1028, FUTEX_WAKE_PRIVATE, 1) = 0

2755 21:01:30 futex(0x7f1c440b1054, FUTEX_WAIT_BITSET_PRIVATE, 1, {3359, 26945244}, ffffffff) = -1 ETIMEDOUT (Connection timed out)

We see the “read” on file descriptor 15 executed by PID 2734 on line 1 above. What are we reading from when reading descriptor 15? Further up the trace file we see:

2734 21:01:30 open("/dev/random", O_RDONLY) = 15

2734 21:01:30 fstat(15, {st_mode=S_IFCHR|0666, st_rdev=makedev(1, 8), ...}) = 0

2734 21:01:30 fcntl(15, F_GETFD) = 0

2734 21:01:30 fcntl(15, F_SETFD, FD_CLOEXEC) = 0

“/dev/random”! Nice, this agrees with the referenced posts.

We can monitor the available entropy by running this shell snippet during a re-test:

while [ 1 ];do date cat /proc/sys/kernel/random/entropy_avail sleep 1 done

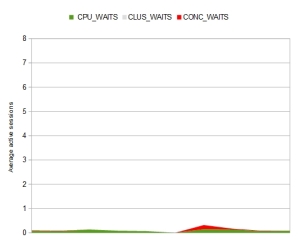

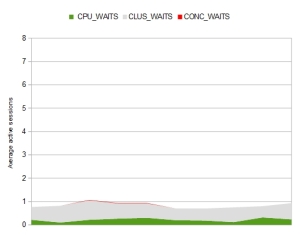

And this is what we see, the available entropy drops causing reads on /dev/random to block. There are a few solutions in the referenced blog and MOS notes.

Wed Aug 19 21:28:07 BST 2015 161 Wed Aug 19 21:28:08 BST 2015 174 Wed Aug 19 21:28:09 BST 2015 134 Wed Aug 19 21:28:10 BST 2015 38 Wed Aug 19 21:28:11 BST 2015 50 Wed Aug 19 21:28:13 BST 2015 61 Wed Aug 19 21:28:14 BST 2015 62 Wed Aug 19 21:28:15 BST 2015 8 Wed Aug 19 21:28:16 BST 2015 21 Wed Aug 19 21:28:17 BST 2015 22 Wed Aug 19 21:28:18 BST 2015 35 Wed Aug 19 21:28:19 BST 2015 37 Wed Aug 19 21:28:20 BST 2015 49 Wed Aug 19 21:28:21 BST 2015 63 Wed Aug 19 21:28:22 BST 2015 0 Wed Aug 19 21:28:23 BST 2015 1 Wed Aug 19 21:28:24 BST 2015 17 Wed Aug 19 21:28:25 BST 2015 36 Wed Aug 19 21:28:26 BST 2015 37

I fully appreciate I am going over work covered by others but I thought the “strace” analysis added to other posts. Also it doesn’t hurt to highlight this issue again as I’m really surprised this is not more widely known as the use of virtual machines is so prevalent these days. I presume connection pools hide this from us most of the time.